Our platform

The most reliable automation solutions platform

Analytics Platform

- Permissions-based platform to accommodate a variety of users

- User engagement metrics to help drive adoption

- Detailed reports for performance review and site management

- Analytics charting for root cause investigation

- Client success and field support

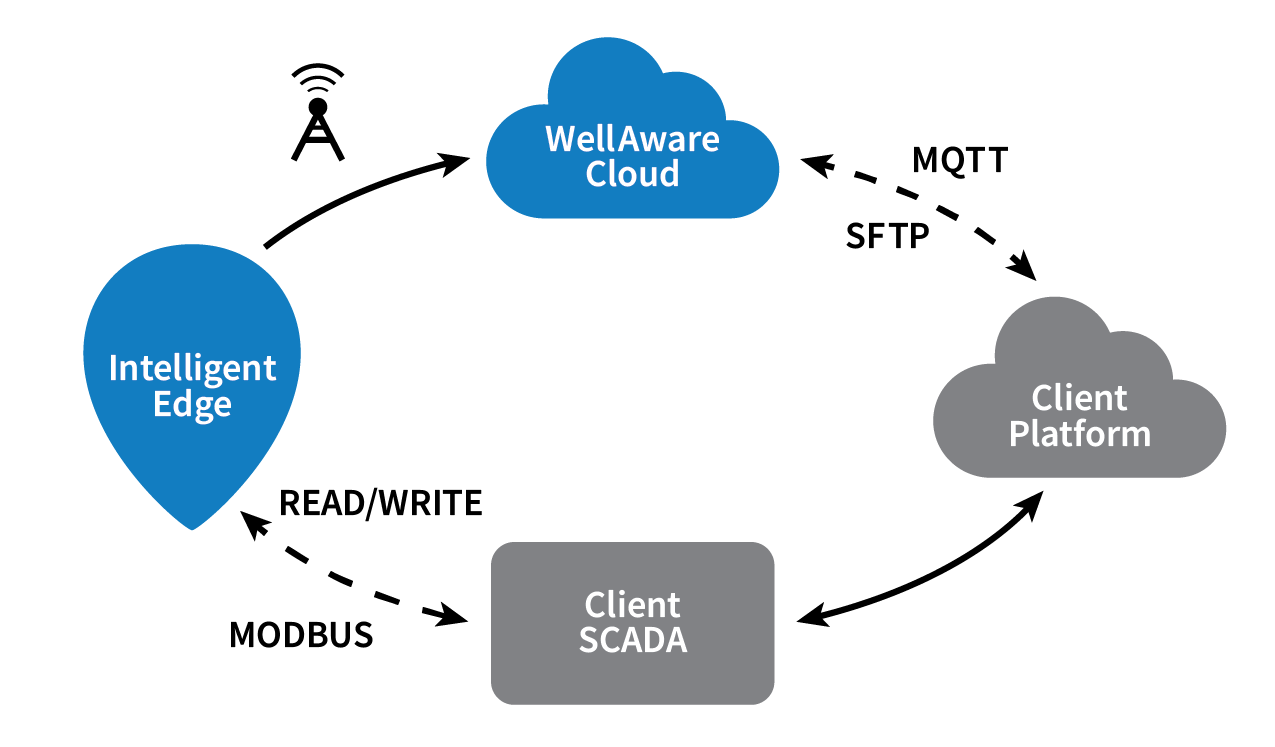

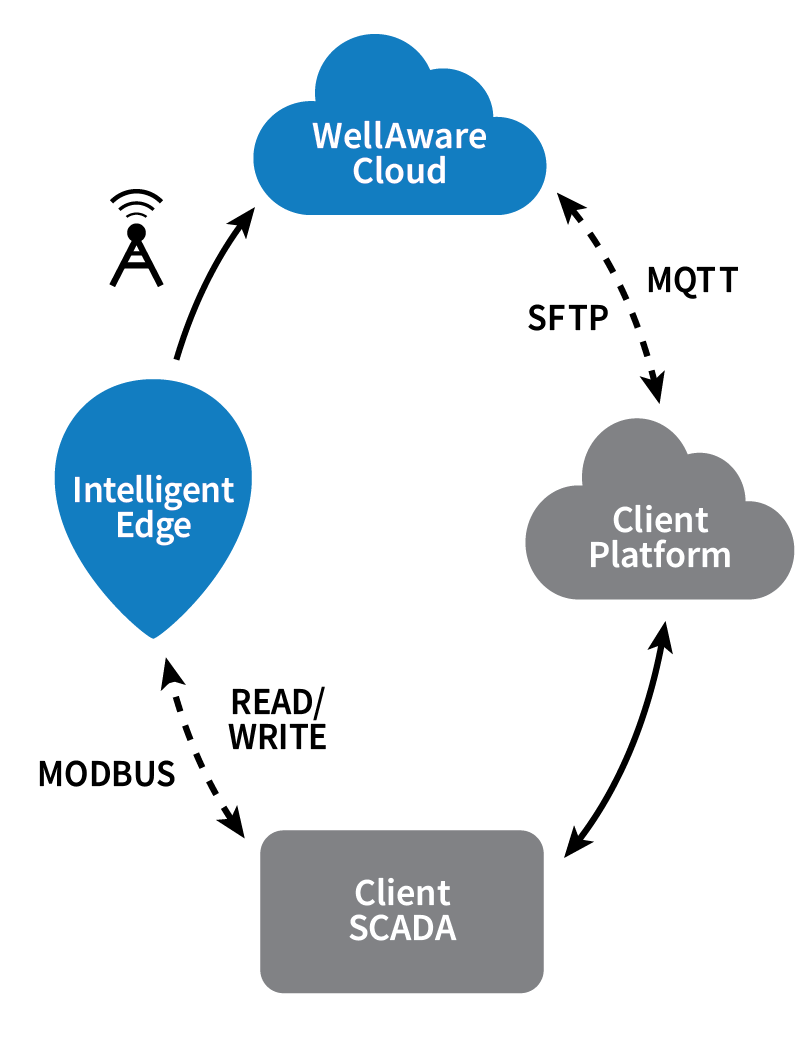

Advanced Communications

- Multi-carrier cellular communications to accommodate remote deployments

- Bluetooth technology for device management and diagnostics

- SCADA, MQTT, and Cloud integration supported

- Integrated bi-directional communications for over-the-air updates and remote support

- Store-and-forward capability ensures no data is lost in the event of a cellular outage

Intelligent Edge

- Class I, Division 2 (C1D2) certified controller with intrinsically safe sensor

- Sensor accuracy of ±0.5% of full scale

- Proprietary automatic pump calibration keeps injection rates accurate and reduces injection variance

- Limited lifetime warranty

- Power anomaly detection predicts power failures before they occur

Technology designed, engineered and manufactured in the USA 🇺🇸

In-house engineering and technology team offering internal control of the entire solution

Reliable bi-directional communications with optional SCADA, MQTT and cloud integration

Over-the-air (OTA) software updates address customer needs and evolving technology

The pump- and power-agnostic solution in the field

C1D2 certification for harsh and hazardous environments

Multi-carrier cellular connectivity options and up to 5-year battery life

Engineered to drive value

Increase Revenue

- Reduce Injection Variance

- Maximize Pump Uptime

- Maximize Production

- Lower Account Churn

Improve Safety

- Reduce Vehicle Miles

- Improve TRIR

- Reduce Risk Exposure

- Attract & Retain Talent

Maximize Efficiency

- Optimize Personnel

- Lower Fuel, Vehicle & Equipment Costs

- Reduce MRO Expense

- Faster Response Time

Enhance Sustainability

- Reduce Emissions

- Reduce Chemical Waste

- Reduce Spills

Proven platform with data integration options

Common-sense pricing

CAPEX or OPEX. It’s your choice. Our flexible pricing options give you high-quality data streams and insights to complement any financial model. Don’t invest in an alternative without checking with WellAware.

We want to hear from you

Contact us to learn more about starting your digital transformation, evaluating your current asset management system, or to get in touch with our customer success team.

Corporate Headquarters

3424 Paesanos Parkway, Suite 200

San Antonio, Texas 78231

Direct Contact

Toll Free: (855) WELLAWAREUS

(210) 816-4600

sales@wellaware.us

Customer Support

(210) 816-4600, ext. 2

support@wellaware.us

Better Data, Better Results™